The Myth of “Minority”

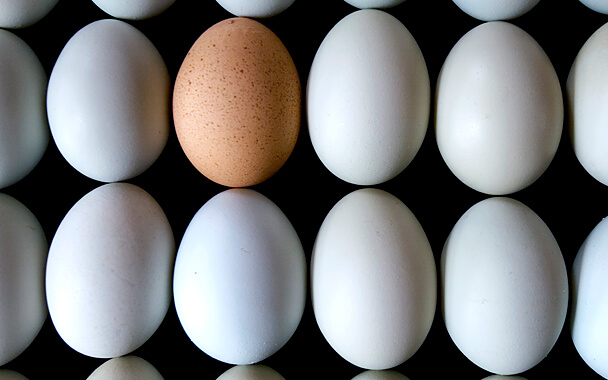

The Atlantic reported yesterday that this year is the first in which “minorities will outnumber white students” in US public schools. It’s apparent what they mean, but the oxymoron speaks volumes about the way we think and speak about race in the US: always in relation to whiteness. Use of the term “minority” to mean “non-white” is so widespread that even if we could excise it, it’s not likely we would find a suitable general term to encapsulate the sheer diversity of race and ethnicity in the United States. In a sense, only two races factor into the mainstream American conversation on race: white and non-white.

The term “minority” is a misnomer at best, and one applied too narrowly. Since at least 2011, New York City has been a “majority minority” city, alongside more than twenty other major metropolitan areas, including nine of the ten most populous U.S. cities. The semantics of “majority minority” are bizarre and tautological: “non-Hispanic whites” (henceforth to be referenced simply as “white”) are a minority in these cities, as is every other racial or ethnic group—meaning every group is a minority, technically.

And yet cities with no racial or ethnic majority are not described as “total minority” cities, or “non-majority” cities; the rhetorical bait-and-switch of “majority minority” seems to mean “a majority of minority groups,” but the fact that the designation is assigned once the white population dips below 50 percent of the total population renders the real meaning closer to “majority non-white.”

Both of these interpretations express the same fact—that whites do not represent a majority—but the grouping of all non-white populations under the implicitly racial term “minority” (or the explicitly racial term “non-white”) marginalizes those populations, and elides their discrete identities.

As the racial and ethnic makeup of the United States changes, our vocabulary must change with it. Nowhere is this more apparent than in mainstream media, from the Associated Press’s famous “looting” images after Hurricane Katrina to the more recent conversations on the treatment of white killers versus Black victims. Language and thinking inform one another, and even more troubling than consciously racist language is unconsciously racist language, so deeply ingrained that it goes unnoticed.

“Minority” is not racist, but it is widely used in such a way as to imply racial identity: a nonspecific racial identity that, whatever it may be, is not white. Thus, every racial or ethnic identity is defined in terms of its opposite, underscoring the power dynamic between the dominant identity and all others. Imagine if women were called “non-male,” or Jews “non-Christians,” or if LGBT people were “non-straight.” Concrete, specific identity becomes invisible, relegated instead to Otherness. This is not far from “colorblind” thinking, by which white people claim “not to see” skin color out of a belief in equality, but in doing so ultimately discount the lived experience of people of color as somehow “invisible” to their supposedly enlightened eyes.

The Atlantic’s data on public school enrollment is in line with population trends in the United States more broadly, and its predictions (namely, a steadily diminishing population share of white Americans) are a foregone conclusion. But what language will we have when white students make up only 40 percent of those enrolled in public schools? Or 30 percent? As the white population slowly drops below that of racial populations traditionally defined as “minorities,” perhaps the concept of a “default” or “majority” race will disappear entirely. Perhaps. In the meantime, we should take care to combat the marginalization of all racial and ethnic populations, beginning with our choice of words.

Follow John Sherman on Twitter @_john_sherman.
